Nothing Works Anymore

An underrated fly in the ointment for a lot of big plans.

Nothing Works Anymore is an idea that’s come up from time to time without much comment. It’s not a scientific claim. It’s rhetorical. One of those loose patterns that gets noticed as a tendency, then gets more and more common. We see examples all over, from transportation and logistics delays to undeliverable military systems to massive fraudulent program bloat to the internet dark age to crumbling infrastructure to illiterate grads to ineffective online services to reproductive collapse, and so on. Everywhere you turn - the parcel delivery chatbot can’t even process your question. The wood screws strip or shear. Right on up to the derivatives time bomb.

When people notice, the common question is why doesn’t anyone do something? Individually, the issues are big but seem easily dealt with. Use better materials. Forgo a percent or two and use first-language call centers. Fire deadwood. Don’t market software that doesn’t work. Hold miscreants accountable. And so on. Yet nothing gets fixed. Problems mount. Until whatever redundancy there was can no longer hide the decay. It appears the accumulation of problems as a group has properties of its own. Beyond the sum of the parts.

Assured Supplies, Rusty Wheel, surreal art print

Start by defining the concept. It’s not literally nothing works - this post was uploaded and is showing on a screen. The term is hyperbolic rhetoric that catches a state of accepted failure. It’s one of the reasons I keep on about organic sociability and personal responsibility. I’m not a guide, but plans should have levels so that they can respond to different levels of disruption. There’s a big difference between retiring to fresher air and generating your own power with a wood gasifier.

The reality is disruption is already starting. The non-clickbaity commentariat is laying out the consequences of the energy and fertilizer disruption. Even a quick end to the conflict can’t replace the lost production now working through the system. And, given that a quick end means Iran basically wins, there will be some kind of change to that US-Gulf State petro-dollar reserve-currency system. Can’t say what - I suspect major, but who knows? Only that the post-war setup will be different. And less advantageous to the US position.

Mateusz Urbanowicz, Art vs. Entropy, 2020

There’s a real range of outcomes, including some pretty awful ones. The more your group or network can mitigate, the better off you’ll be. This is personal level, though. On a system level, there are serious implications for the original Script read. That was a mass-level thing, involving NPC direction and internal influence. There were obvious problems as well. We’ve seen commitment to the House of Lies, which we called, and then the Zio-cuck faction, which we didn’t derail the needful. But I also noted the clash between the techno-luciferian matrix and nothing works anymore a few times. Even called it the existential conflict of our era.

This comes together with some urgency in managed outcomes for a degenerate population. Readers know the Paste Train is my term for the humane end of the beast plan for degraded masses. High-protein insect slurry in some form and a secure pod with personalized AI entertainment. Not something I dwell on because the reality-facing aren’t interested. Beyond recognizing it as something to avoid.

The first issue is the autonomous reliability of AI. Utopic and dystopic projections both assume AI runs things perfectly - what differs is the control ethos. Nothing works anymore cuts across Matrix or Federation. The problem is what the technology is built on. I have more to say on that once the Ontological Hierarchy book is done. For now, picture the total aggregate “code base” that makes up the beast system. All the layers of institutions and information. The abstract theories and algorithms in and out of computers. Centralized maintenance and support. Brittle, JIT, redundancy-free complexity. That’s the training data and physical infrastructure.

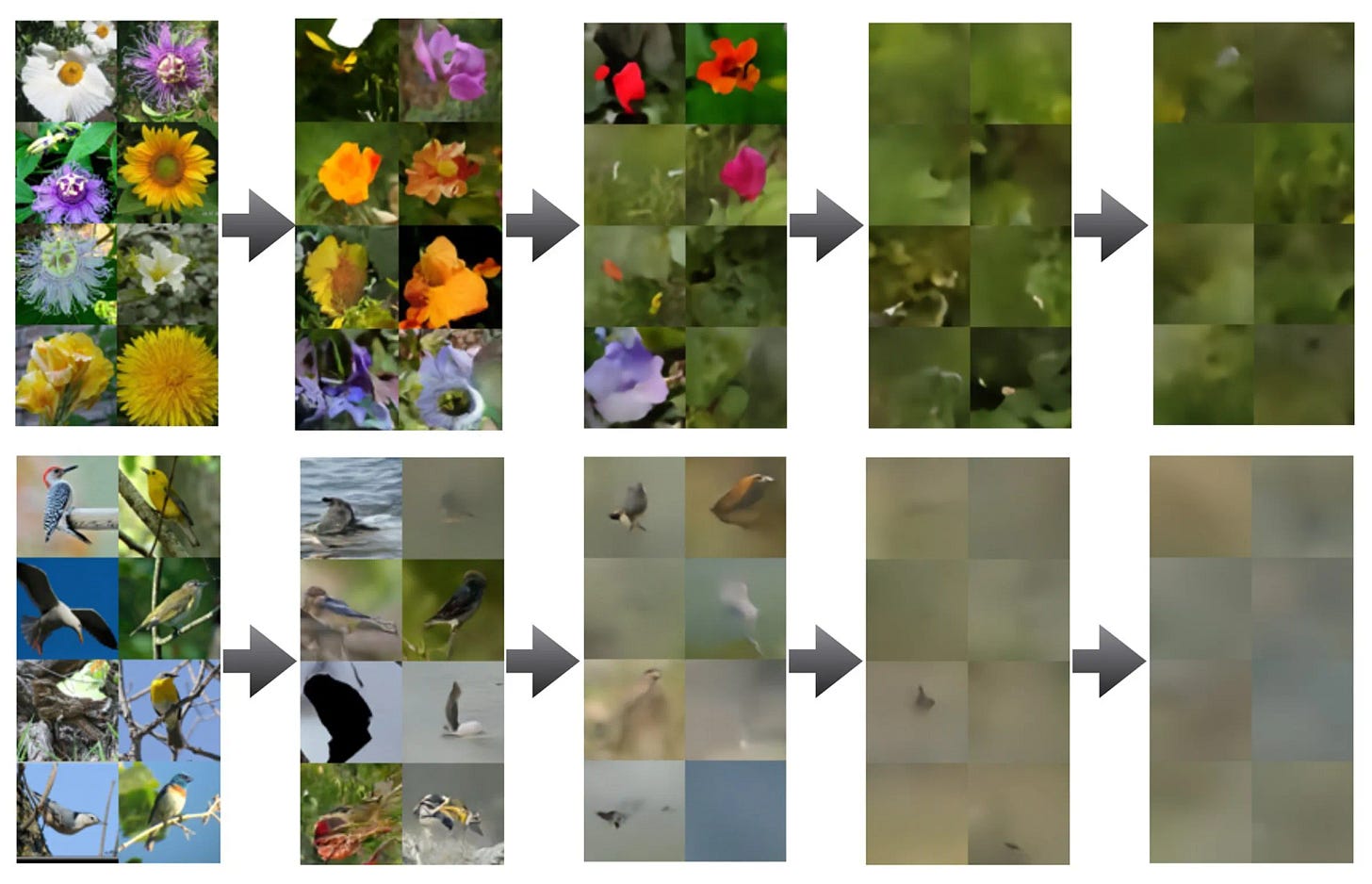

Nothing Works Anymore looks a lot like systemic entropy in whatever we can call that foundation. Affecting digital and physical systems in different ways. Algorithmic Decay hits AI directly. The math and logic that the algorithms are built on are abstractly perfectly precise, infinitely extensible, and replicable. But output degrades, individually and systemically. In the first case, it comes from micro-errors compounding with each translation into mathematical latent space.

The second is a recursive trap from training with AI-generated data, where creative range vanishes. You can see it in how an AI image diffuses to noise as it iterates. Unless this can be solved, AI systems can’t be left to run alone.
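A toy sketch of that recursive trap, for anyone who wants to see the shape of it. This is not how any real AI system is trained. It assumes the "model" is just a normal distribution refit each generation on a small batch of its own output, and the function name, sample size, and generation count are all made up for illustration.

```python
import random
import statistics

def recursive_training(generations=200, sample_size=10, seed=0):
    """Toy model of a system trained only on its own output.

    Each generation fits a normal distribution to samples drawn from
    the previous generation's fit. All parameters are illustrative
    assumptions, not measurements of any real system.
    """
    rng = random.Random(seed)
    mean, spread = 0.0, 1.0  # generation 0 stands in for the "real" data
    history = [spread]
    for _ in range(generations):
        # Draw synthetic data from the current model...
        samples = [rng.gauss(mean, spread) for _ in range(sample_size)]
        # ...then refit the model on that synthetic data alone.
        mean = statistics.fmean(samples)
        spread = statistics.pstdev(samples)
        history.append(spread)
    return history

history = recursive_training()
print(f"spread at start: {history[0]:.3f}")
print(f"spread at end:   {history[-1]:.3e}")
```

The spread stands in for creative range. With small batches and no fresh outside data, it tends to shrink generation after generation. Feeding real data back in each round is the obvious thing to vary if you want to see what slows it down.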

Infrastructure brittleness affects a lot of systems. Global supply chains, logistics, energy grids, and software stacks are interdependent and optimized for efficiency. Failure in one disrupts others. The “cloud” relies on physical servers and real energy. Handing off to AI isn’t a positive shift. Epistemic Collapse is the human counterpart. When tools and methods of knowing are unreliable, the system can’t self-diagnose or self-correct. Everything from SJWism to the reproducibility crisis fits here.

Wingsdomain Art and Photography, Robot Workers At A Sweatshop After the Great Dystopian Collapse of 2050, photograph

Does AI just blend into this infrastructure and turn everything “smart” and frictionless? Or does it add new points of unpredictability and failure? The Techno-Luciferian vision assumes a stable, predictable, high-trust substrate. The last A in MAGA. But Nothing Works Anymore is substrate-level rot. On all the levels: bureaucratic, logistical, informational, and social.

Surreal Machines: The insulting machine

My read has been that The Script planned on using AI to stabilize and manage the decay. But unattended AI systems also decay. And they’re very complex - they need a functioning, high-maintenance world that’s reality-grounded. Handing control to AI to fix things pulls further away from grounded truth and enhances the problem. That’s the basic doom loop no one wants.

Personalized Claude is an impressive technology. It’ll make things possible for me that weren’t before. But it can’t see outside its frame. It needs someone to keep it between the lines. And it is not an engineering problem. It’s entropy. High-speed iterating makes it worse by increasing the number of doom loop cycles per unit of time.

Not hard to see why organic, personal, and localized are constant recommendations. But this is looking at the macro level. Everyone’s circumstances are different. What I can see anecdotally indicates the vast majority aren’t thinking about their long-term survival. NPCs can’t. They need to follow someone’s plan, and the screens aren’t helping here. We’re all in the same boat - most people we know are NPCs. If you’re FTS-1 - regardless of status - taking responsibility may be the best thing you can do for those you care about.

Paul Kirchner, Awaiting the Collapse, Survivors

So what about the macro level? Readers know we’re in the event horizon of systemic collapse, and what’s next isn’t formed. Prediction is throwing blind darts. Some might hit, but there’s no way to know which beforehand. Being driven out of the ME while damaging everyone there furthers autarkic imperialism. Iran looks to be the winner here, but it will be burning time and resources rebuilding short-term. Whatever is left of the Gulf States and Israel likewise. Knocks that area off the board as an energy competitor while reorientation happens. It’s just being handled so badly that it seems like self-sabotage - something to watch.

Gale Stockwell, Parkville, Main Street, 1934, oil on canvas, Smithsonian American Art Museum

There is a link between an autarkic direction and nothing works. Shortening logistics chains and reducing external dependencies reduces potential failure points. But resilient local systems aren’t a dude in the fields. The same knowledge and skills are needed that are decaying in the macro. Hence the organic socializing. Start now, when it’s still relatively doable, and you can collectively move and develop as times shift.
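The failure-point arithmetic is easy to rough out. A minimal sketch, assuming every link in a chain fails independently and is equally reliable, which real supply chains are not. The 99% figure and the chain lengths are hypothetical, picked only to show the trend.

```python
def chain_reliability(link_reliability, links):
    """Chance that every link in a chain works.

    Assumes links fail independently and share one reliability figure,
    a simplification for illustration only.
    """
    return link_reliability ** links

# Hypothetical chains: a short local one versus long global ones.
for links in (5, 20, 50):
    print(links, round(chain_reliability(0.99, links), 3))
```

Same quality of link, very different odds of the whole thing holding. It says nothing about whether the local links are actually as good as the global ones, which is the skills point above.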

The Paste Train needs mechanisms that work. If logistics fail, so does the train. UBI or digital credit-based distribution depends on financial and logistical networks working.

House of Lies techno-luciferians have to deliver. Right now, AI is propping up the economy, leading to downstream concerns. I’m just looking at the claims vs. the IRL mass experience. It’s probably easy to ignore when it’s a screen hallucinating a footnote or glitching a picture. What it would probably take to register would be a tangible system failure because of AI entropy. An autonomous power grid or payment system shutting down without anyone clearly knowing why.

A few things to watch for, not to obsess over or change, but for planning and timing. A major real-world hallucination event that disrupts supply chains, algorithmic trading, or medical processes. Significant reorientation of budget priorities to maintenance and repair. Any meaningful analog revival movements. Softer claims around AI futures in the narrative. There are more, but that gives an idea. It’s a vague landscape. Look for signs that the threat is recognized.

At root, Nothing Works Anymore is fallen, entropic material reality eroding Scripted dreams of digital utopia. The AI meant to solve decay is a product and potential accelerator of that decay. It looks like a race to what gives way first. House of Lies narrative, the AI hype bubble, or a social order that finds nothing off about a “leader” spewing deranged, contradictory walls of Gamma all-caps lies. Or said walls being part of massive Team Golem profiteering. Maybe it all implodes at once.

Until then, watch for consolidated incompetence effects. Major projects that aren’t even cutting edge, yet never work at all. Not just orders of magnitude over budget or late. That’s Nothing Works Anymore hitting a next level - Cali high-speed rail being a perfect example.

That’s long enough. I’ll cycle back around to this theme with more implications and opportunities if it proves interesting. There is a connectivity between all this. The political stuff may be nonsense, but it’s the real landscape we’re stuck with. How things fracture depends on it. Socialization and organic culture may seem unserious, but it’s beyond essential. The microcosm is shock when someone is banned. The House of Lies created an illusion of false inclusivity. It weaponizes the low status and NPCs. Real human relations aren’t like that.

The whole point of trying to hew practical is to anchor the abstract ruminating to the physical world. Thought experiments are fun and can be clarifying. But there’s way too much [if I can imagine it, I can get defensive arguing for it]. That self-indulgence is the luxury of the r-selected mouse utopia. And it’s near the end of its sell-by date. For the last decade, the Band has repeated this advice calmly and without doom. It’s time to turn up the urgency a little. It’s time for extropy.

The picture of awakening at the end, and not a pleasant one.

Note the quiet underlining of the cat under the table, playing with the dying bird.

It gets really bad when you realize AI logic is trained by semi-literate African subcontractors.

A more advanced one is a slow labor of love. Working on small scale tests myself.

Literally can’t be worse.